The week before last was a terrible week for me. It was one week after I had published my books. I was looking to take some time off from updating the books. After about 6 months of being self-employed, doing the things I love to do, I felt it was time for me to return to the workforce. Let’s face it, it’s not easy to be self-employed and get a steady paycheck. So I started looking for jobs.

All was well. I had applied to a number of jobs that I was interested in. By the end of the week however, I had nothing – nobody called back. Naturally, coming off the high of having just published a couple of books, it was crushing.

Remember a few months ago, when I was mulling over acquiring a tablet? By sheer coincidence, I came into possession of a Nexus 10 a few days after I blogged that entry. It’s an older model, but hey, beggars can’t be choosers. Despite coming into possession of the tablet, I never really used it.

Anyway, back to the week before last. Between getting rejected for the jobs that I wanted and a few more pieces of not-so-nice news, I was feeling pretty shitty about myself. So on Friday evening, I altered my state of mind chemically to relax a little.

After some drinks, I took out my tablet and fiddled with it while relaxing with pineapples. I decided to download my favourite game on tablets since 2011 – Jetpack Joyride. Now, when your brain is under the influence, time seems to slow down – your body appears to lag. Specifically my eyeballs felt like they were lagging. I kept looking at the right of the screen, and I could feel my eyes darting to look at the right and back to Barry on a very regular basis.

This led me to ask a question: what does Jetpack Joyride look like when one’s eyes are tracked? What would a heatmap look like? Clearly there are eye tracking devices out there like the EyeTribe or Tobii, which are fantastic. But I didn’t have access to any of those. The front-facing camera of my tablet appeared to frown at me. Then it hit me: why not use it to do eye tracking?

So I dragged myself to the computer, and started learning how to write Android apps. To their credit, the Android developer page is absolutely easy to use – if an intoxicated person can read and create an app in about an hour, you know it’s bloody good documentation. I didn’t get far, except to capture videos and detect my face, which is easy stuff anyone can do. I went to bed.

Building The App

The next morning, I woke up, clear in the head, and decided to actually make it happen. I had a lot to figure out – I had long returned my optics knowledge to my physics lecturers. Even worse was geometry – I was never particularly good at that branch of math – I’d rather work with PDEs and martingales than work with geometry. Nonetheless, both were important in figuring out how this would work.

The app is broken down into two parts: the Java bits, and the JNI bits. I originally started off using the Java version of OpenCV on Android. That was a mistake. Everything was so slow. After about 2 days of dicking around with the Java versions, I decided to learn how to write JNI apps instead. Coincidentally, I had just done a consult that required me to write some C++ code – so I was not as rusty as I had expected.

How the app worked was simple:

- Find eye/eyes on the face.

- Find the center of the eyes. I used Fabian Timm’s algorithm, and quickly ran into some problems. Googling around, I ended up with Tristan Hume’s correction/clarification.

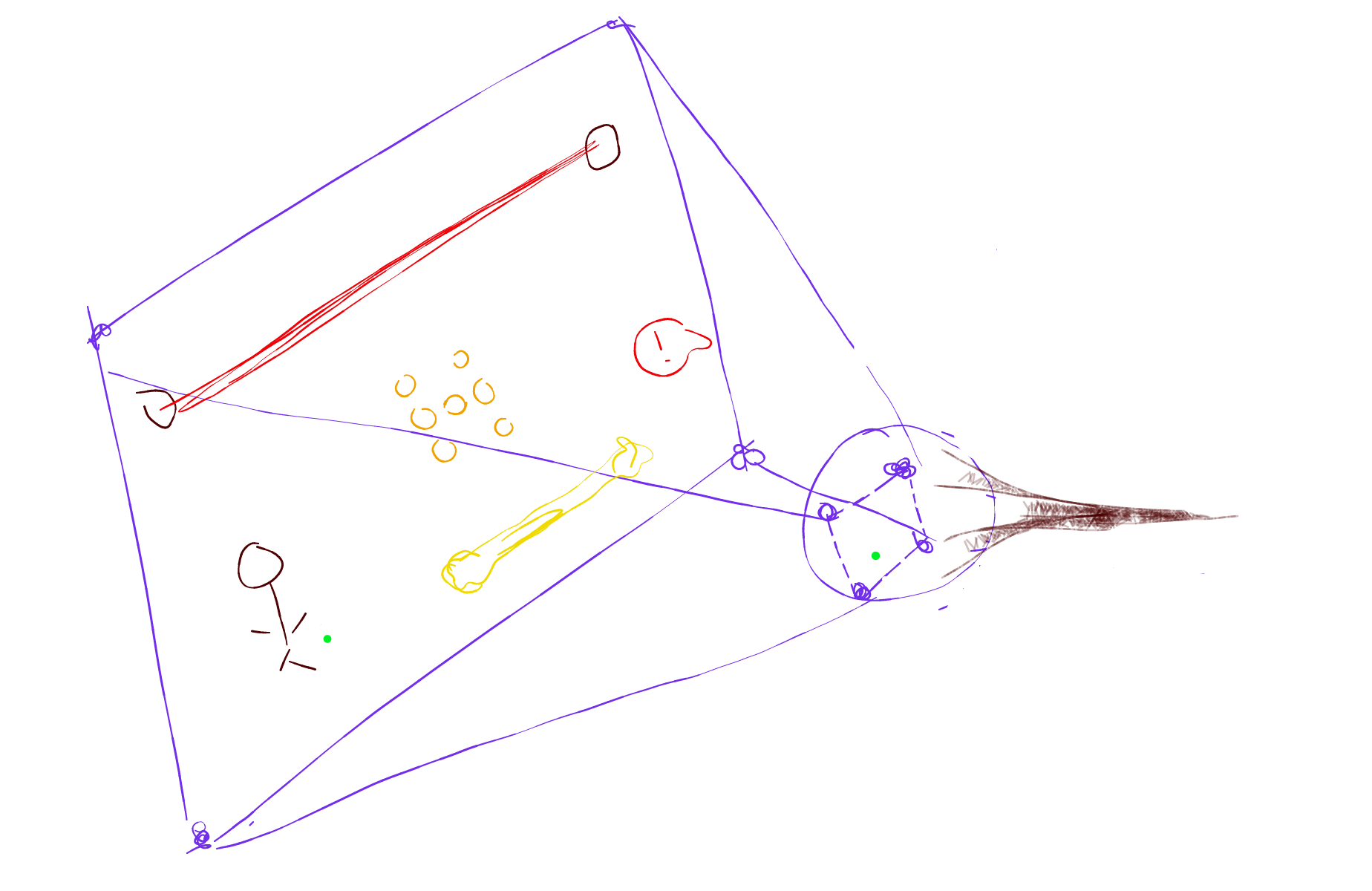

- The screen has 4 buttons, one on each corner of the screen. The idea is that your eyes follow where your hand goes. So if your finger is at the top right (tapping the top right button), that should create a point in space that could be recorded as top-right. The theory behind it is that after tapping all 4 corners of the screen, we’d have 4 eye center points for each eye. If you draw lines to connect the 4 eye center points, you’d get a small rectangle. That rectangle is a scaled version of the screen. Perhaps this sketch will help better:

- Corrections to head movements are added.

- Gaze points are then inferred from scaling the current points.

- If more than one eye is detected, the two eyes have to reach a consensus on what the mutual point is.
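The calibration and scaling steps above can be sketched in a few lines of Python. This is a minimal sketch, not the app’s actual code: the names `make_gaze_mapper` and `consensus` are made up for illustration, the mapping is assumed to be a plain linear scale between the averaged calibration edges, and the consensus is assumed to be a simple average of the per-eye estimates. Head-movement correction is left out entirely.

```python
def make_gaze_mapper(corners, screen_w, screen_h):
    """Build a function mapping an eye-center point (camera pixels) to screen pixels.

    corners: dict with keys "tl", "tr", "bl", "br", each an (x, y) eye center
    recorded while the matching corner button was tapped.
    """
    # Average the two points on each side to get the edges of the small rectangle.
    left = (corners["tl"][0] + corners["bl"][0]) / 2
    right = (corners["tr"][0] + corners["br"][0]) / 2
    top = (corners["tl"][1] + corners["tr"][1]) / 2
    bottom = (corners["bl"][1] + corners["br"][1]) / 2
    if left == right or top == bottom:
        raise ValueError("calibration points are degenerate")

    def to_screen(eye_center):
        # Normalised position inside the rectangle. This also works when the
        # camera image is mirrored, because right - left is then negative too.
        u = (eye_center[0] - left) / (right - left)
        v = (eye_center[1] - top) / (bottom - top)
        # Clamp so that small overshoots stay on the screen.
        u = min(max(u, 0.0), 1.0)
        v = min(max(v, 0.0), 1.0)
        return (u * screen_w, v * screen_h)

    return to_screen


def consensus(points):
    """Combine per-eye gaze estimates into one point (assumed: plain average)."""
    if not points:
        raise ValueError("need at least one gaze estimate")
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)


# Hypothetical calibration data for one eye, on a 2560x1600 screen.
corners = {"tl": (100, 50), "tr": (120, 50), "bl": (100, 60), "br": (120, 60)}
to_screen = make_gaze_mapper(corners, 2560, 1600)
print(to_screen((110, 55)))  # (1280.0, 800.0), the middle of the screen
```

Note how small the calibration rectangle is: in this made-up data, 20 camera pixels span 2560 screen pixels, so a one-pixel error in the eye center moves the gaze point by 128 pixels. That is one way to see why small head movements throw the estimate so far off.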

Sounds simple enough. And it was simple enough that it worked. There were a few flaws. For example, my head had to be very still. My code for correcting the movements was not good enough – small movements of my head led to far-off estimates. So I delved in deeper. Turns out there were a lot of things I didn’t know about geometry and optics.

My assumptions were wrong. You see, the camera is at the top of the Nexus 10, but the screen that I am looking at is below the camera. When you learn optics in high school physics, one of the first things you learn is the optical axis. The eyes I was tracking? They’re wayyyyyy off axis. And to correct for those I needed some geometry skills.

I’m absolutely terrible at geometry and when I went to learn more about geometry, I had a very hard time – something something brain plasticity something something age – I don’t learn stuff as quickly as I did when I was younger. From relearning the basics of optics and geometry, I relearned projections. All of a sudden I realized that the projection of a circle in 3D space into a 2D space would yield a 2D ellipse. I had hit the jackpot. I had just discovered a way to correct my gaze points. You see, the iris of the eye almost always renders as an ellipse on screen, and if I could reverse it, it would be useful.

If that could be used to calibrate the system… As I worked more on it, I began wondering about using it to map to a gaze point. With the reversal of an ellipse into a circle in 3D space added in, the system began to perform much better.
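To make the ellipse idea concrete, here is a rough Python sketch of the geometry. It is not the app’s code, and it assumes an orthographic (weak-perspective) camera, which is a simplification. Under that assumption, a circular iris tilted by an angle θ away from the camera axis shows up as an ellipse whose minor axis is the major axis times cos θ, so the tilt can be read back from the axis ratio. The function names are hypothetical.

```python
import math


def iris_tilt(major_axis, minor_axis):
    """Tilt (radians) of a circular disc from the axis lengths of its projected ellipse."""
    if major_axis <= 0 or not 0 <= minor_axis <= major_axis:
        raise ValueError("expected 0 <= minor_axis <= major_axis and major_axis > 0")
    # Orthographic assumption: minor = major * cos(tilt).
    return math.acos(minor_axis / major_axis)


def disc_normal(major_axis, minor_axis, major_axis_angle):
    """Unit normal of the disc, with z pointing along the camera axis.

    major_axis_angle is the orientation of the ellipse's major axis in the
    image, in radians. The disc leans along the minor axis, which is
    perpendicular to the major axis. The projection cannot tell which way it
    leans, so the mirrored normal (x and y negated) is equally valid.
    """
    tilt = iris_tilt(major_axis, minor_axis)
    lean = major_axis_angle + math.pi / 2
    return (
        math.sin(tilt) * math.cos(lean),
        math.sin(tilt) * math.sin(lean),
        math.cos(tilt),
    )


# A perfectly round iris looks straight at the camera.
print(disc_normal(10.0, 10.0, 0.0))  # (0.0, 0.0, 1.0)
# An iris half as tall as it is wide is tilted by 60 degrees.
print(math.degrees(iris_tilt(10.0, 5.0)))
```

The two-way ambiguity in the lean direction is one reason the ellipse alone is not enough, and why it helps to combine it with the calibrated eye center positions.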

I found writing for Android to be exceedingly tedious, even with C++, particularly when testing ideas. So eventually I settled on writing and testing my algorithms in Python, and once I was satisfied with them, rewriting them in C++. Along the way, I had to write my own ellipse fitting algorithm based on Ahuja, Nguyen and Wu (2010) because fitEllipse on contours just wouldn’t cut it.

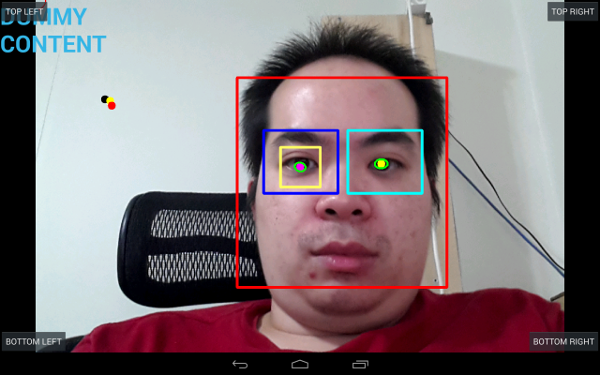

I spent the remaining 2 weeks working on it, on and off between my consults. Eventually a system developed where I could track the position of my eye and guess my gaze quite well. This is what the app looks like (in the calibration Activity):

The four coloured dots (red, yellow, white and black) are the predicted gaze spots I was looking at, pre-scaled. They’re scaled out, and a vote/consensus algorithm estimates where I was actually looking. This picture is pretty accurate. But sometimes it can be totally inaccurate.

The app generates a heatmap on the onDestroy() method call and puts it in storage. To make the heatmap, I used Lucas Beyer’s extremely extremely excellent heatmap library. Which… is what you probably are here to see anyway.

Testing the System

Along the way, I tested the system – first with games, then with websites that I read on a regular basis. I started with games, because that was what I had originally envisioned the project for: I had wondered where my eyes were looking while playing Jetpack Joyride.

Now, my app only generates heatmaps on a transparent PNG. For the pictures of the games below, I started the app, played the game for a bit, paused the game, stopped the app to generate the heatmap, and then switched back to the game to take a screenshot. I then combined the heatmap with the screenshot in GIMP, applying the appropriate scale factors, which are reported by the app as well.

Jetpack Joyride

There is one word to describe Jetpack Joyride: joyful. I’ve always liked Jetpack Joyride. Though if you think about it, the in-game scientists work in a very fucked up place. Why would there be random zappers that the scientists can just walk into? I’ll let the missiles slide, but the laser beam and the zappers? What kind of workplace is that? And of course there is also the change of environments – I mean, there are underground caverns with molten lava that the scientists work in. Messed up.

Then of course, there is Barry, who supposedly wanted a MACHINE GUN jetpack to do good. Yeah right, Barry. Also, why can’t Barry get an armour made entirely of whatever Flash (the dog) is made of? Flash can walk into zappers, missiles and laser beams without harm.

Anyway, here’s what my gaze patterns look like when I play Jetpack Joyride:

It turns out I look at Barry way too often. To quote cfgt, “no wonder you are so terrible at Jetpack Joyride” (The furthest run I have ever done is 5141m). I later got an earful about how to play Guitar Hero as well – apparently you’re supposed to hit the notes according to the music, which isn’t entirely helpful to me since I don’t listen to that kind of music outside of playing GH or Rock Band. I mean, if they had a game called Symphony Orchestra, I could probably play the violin and piano parts well…

Smash Hit

Looking for another game to play and help keep my mind blank while figuring out the geometry of the eye and camera, I stumbled upon Smash Hit by Mediocre Games. Well not so much stumbled as it was listed in Google Play’s #1 Featured Games optimized for tablets.

It is a fun game, where you shoot metal balls at glass pyramids to gain more metal balls and at glass panels to avoid getting hit. If you get hit you lose 10 balls. Again, the objective is distance travelled, except this time it’s from a first person view.

Here’s where my eyes looked:

As can be noted, I’m mostly focused on the center (or close to it – the tracker isn’t quite accurate yet as I have some other things to figure out). I’m again probably playing this wrong, but who cares.

Dungeon Keeper

As a child, I loved playing Dungeon Keeper – the game’s tagline was “It’s good to be bad”. It was also one of the games that put me in a battle of wits against my dad. I played so much of the game that it affected my school (not that it ever mattered), and my dad uninstalled the game. And thus began a series of installing-in-more-obscure-locations by me and uninstalling by my dad. Ah, fun times.

Roused by nostalgia, I downloaded Dungeon Keeper on the tablet for a play. It was the most disappointing experience I have had so far. It’s pretty much Farmville with a DK skin over it. It was a terrible terrible terrible game. Don’t ever play it. Everything is optimized towards the player spending real money on gems. The game imposes stupid build times, and encourages the user to spend gems to rush them. It’s these sorts of nonsensical things that are ruining gameplay in today’s gaming industry. Absolutely disgusting.

Anyhow, I DID play a few hours/days worth of game (AND I DIDN’T SPEND A SINGLE FUCKING CENT, EA GAMES!). Here’s what a session looked like:

I think it’s a bit sad that I spent a lot more time looking at the home/switch app button than other spots in the game. Because that’s all you can do really, if you don’t want to pay money for the in-app purchases. You set a few things to do, and then come back in a few days to check on the progress. Or if you have push notifications turned on, the app will remind you to check back with it.

There is not one little bit in that game that is worth playing. Shame. I loved Dungeon Keeper. EA just took a brand and destroyed it.

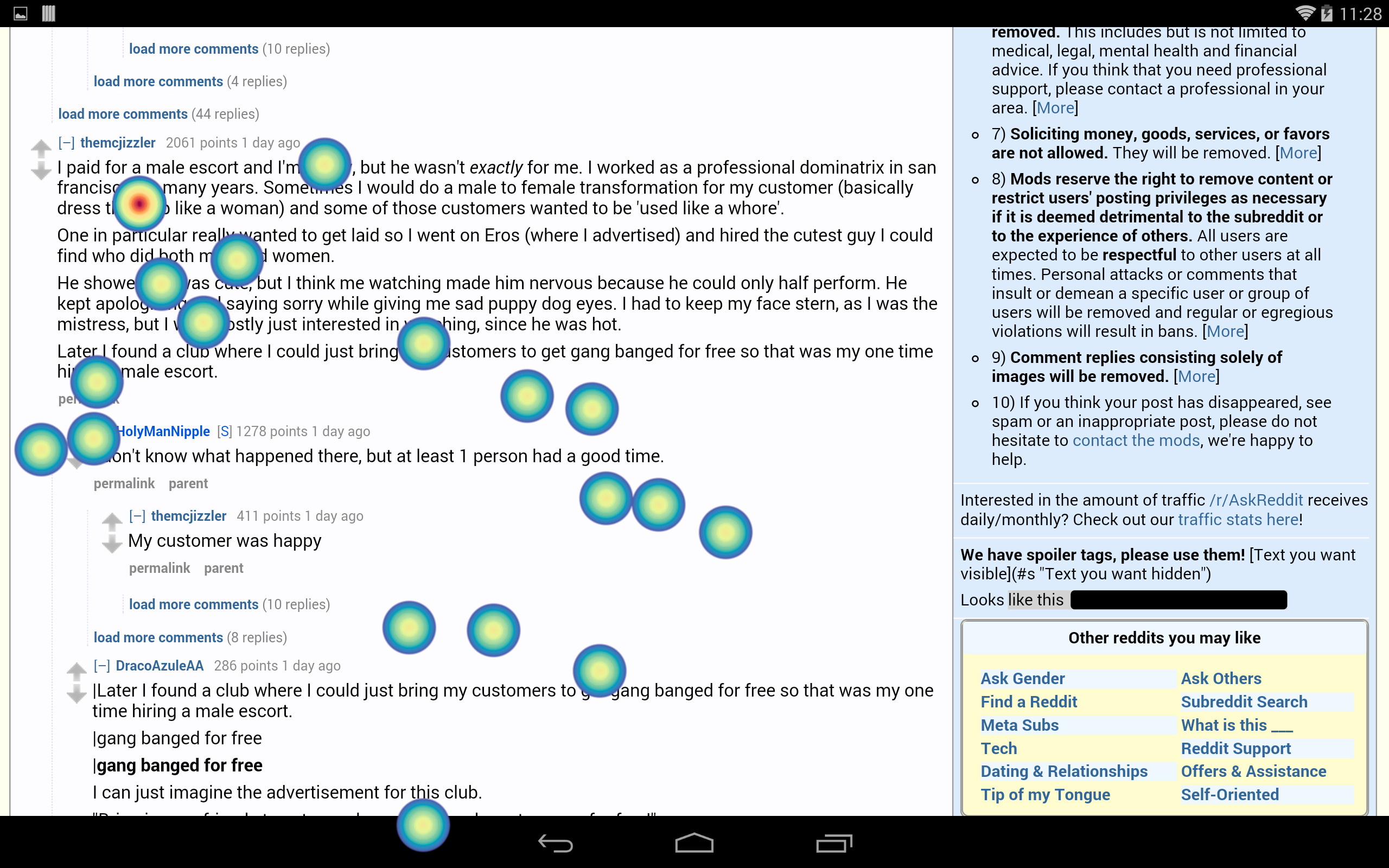

I also checked out reddit with the eye tracking app. This was after I had added additional code to take care of my glasses. Took about 5 tries to take this. I read a new comment thread each take.

My general impression from a number of recording sessions with reddit is that I skim way less than I expect to – the dwell time on reddit comments is nearly as long as the average dwell time on the text of Paul Krugman’s op-ed in the NYT. I also happen to waste a lot of time on reddit according to my RescueTime, so I should probably stop doing so.

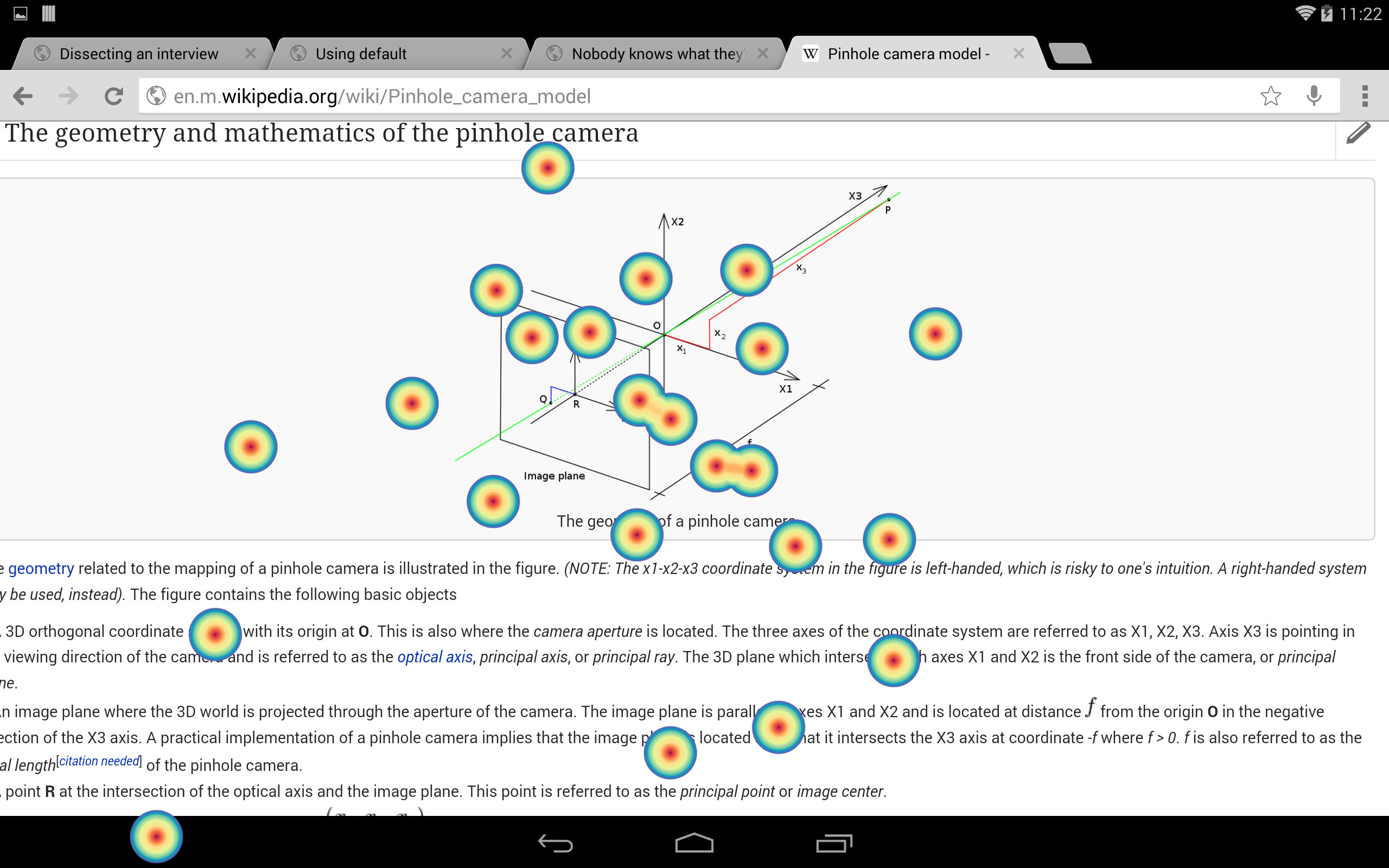

Wikipedia

One of the things that I had to learn while writing this app was the pinhole camera model. So I thought, hey, why not do some tracking analysis on my eyes while I re-read the page. This one was a bit hard. The debug photos showed something I hadn’t realized happened when I was reading the page – I furrow my face quite a bit. I also subconsciously look down a lot to take notes or doodle on a piece of paper while reading, and that kinda messed with the analytics. I had made it such that when the app lost track of my eyes, it would use the previous position as its current position until the eyes were found again – basically caching to overcome the problem of microsaccades.
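The caching behaviour described above is simple enough to sketch in Python. This is an illustration rather than the app’s code: the class name `LastKnownGaze` is made up, and the optional `max_missed` cut-off is a hypothetical addition, not something the app had.

```python
class LastKnownGaze:
    """Reuse the previous gaze point whenever the eyes are lost for a frame."""

    def __init__(self, max_missed=None):
        # max_missed=None reproduces the described behaviour: reuse the old
        # point for as long as it takes to find the eyes again.
        self.max_missed = max_missed
        self._last = None
        self._missed = 0

    def update(self, detection):
        """Feed one frame's detection ((x, y) or None); get the point to record."""
        if detection is not None:
            self._last = detection
            self._missed = 0
            return detection
        self._missed += 1
        if self.max_missed is not None and self._missed > self.max_missed:
            # The eyes have been gone too long: stop recording stale points.
            return None
        return self._last


tracker = LastKnownGaze()
frames = [(400, 300), None, None, (410, 305)]
print([tracker.update(f) for f in frames])
# [(400, 300), (400, 300), (400, 300), (410, 305)]
```

With no cut-off, every frame spent looking down at a notepad gets counted as dwell time on the last spot seen, which is exactly how the Wikipedia heatmap got skewed. A small `max_missed` would still bridge blinks and brief dropouts while ignoring longer look-aways.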

Anyhow, here’s what it looks like when I read Wikipedia:

Accidentally Racist

There are a great number of flaws in the app. For one, the reason I had to switch in and out of the app was that after about 1 minute, the app would crash (which is why I was able to reliably use onDestroy()). It would also cause the tablet to heat up real quick as it consumed a lot of resources – though with Dungeon Keeper, DK alone heated up the tablet too. The usable framerate was poor too, so only very few points were captured – about 1 per second. Error correction of the estimation was also not really up to scratch. I could probably do with some more work on that. Also, it’s not as rotationally invariant as I want it to be, nor is it as scale invariant as I expected it to be. In fact it is quite sensitive to scale, so a lot more tweaking needs to be done.

It also only works when the Nexus 10 is in landscape mode. I tried working with portrait mode, but because the camera would be at the side, the angles were all wrong. There is probably some correction algorithm which I have yet to learn, so there is room for improvement there.

But the most hilarious part of the app is that it’s accidentally racist. As you can see from the picture above, I’m of Asian descent. My eyelids change form from time to time. Sometimes, especially when I’m tired, or when I have just woken up, my eyes look like this:

Completely normal looking eyes with double eyelids. But some other times, I suddenly appear to have gained an epicanthic fold and my eyes look like this:

Yes, I know, it just looks like I have opened my eyes bigger in the first picture. But not really. The crease only happens when I am sleepy or my eyes are particularly sticky. Otherwise, it’s the regular Asian single eyelid thing. This is when the app would stop detecting my eyes. Well, at least now I know exactly why jozjozjoz’s camera was accidentarry lacist.

Also, because of the method I use to detect irises, the app wouldn’t work on my darker skinned housemate either. In fact, I tested my app’s face detection with several stock photos and it would only work well with fair skinned people with dark irises. The method I use to detect irises is to super blur the input image, so that only the difference between the sclera and the iris would be picked up. Other edge-finding algorithms were too expensive to be used on an ARM device.

If you have ever met me in real life (or attended one of the talks I occasionally give), you’d notice that I wear glasses. And yet the picture above has me without glasses. I discovered that the Viola-Jones method of detecting eyes wasn’t that great if you wear dark-rimmed glasses. But I was able to test the app without wearing glasses. Surprisingly, neither Jetpack Joyride nor Smash Hit required me to squint at all. I later added some code to deal with the dark-rimmed glasses – it wasn’t very good code however – every analysis required 3 or 4 tries before it could complete. I realized much later that it was due to the reflections off my glasses, which interfered with a lot of the analysis.

Also, since it was developed to be used in a typical office environment, any change in lighting will severely distort the detection. Hence in the daytime, when sunlight is streaming into the room, the app can’t be used. I recently added code to equalize the histogram of the input image, but I still haven’t figured out what to do with the specular highlights of the eye. See, the reason it worked is because I worked late into the night, when my eyes would dry out, leaving minimal amounts of specular highlights.
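For anyone unfamiliar with histogram equalization, here is a plain-Python sketch of the idea on a greyscale image stored as a list of rows. It is only an illustration: in an OpenCV-based app a single call such as `cv2.equalizeHist` would normally do this job, and nothing here is taken from the app’s own code. The function name `equalize_histogram` is made up.

```python
def equalize_histogram(image):
    """Spread the grey levels of an 8-bit image over the full 0..255 range.

    image: list of rows, each a list of ints in 0..255. Returns a new image.
    """
    # Count how often each grey level occurs.
    hist = [0] * 256
    for row in image:
        for value in row:
            hist[value] += 1

    total = sum(hist)
    if total == 0:
        return [row[:] for row in image]

    # Cumulative distribution: how many pixels are at or below each level.
    cdf = []
    running = 0
    for count in hist:
        running += count
        cdf.append(running)

    cdf_min = next(c for c in cdf if c > 0)
    if cdf_min == total:
        # Only one grey level is present, so there is nothing to stretch.
        return [row[:] for row in image]

    # Lookup table: darkest level present maps to 0, brightest to 255.
    lut = [max(0, round((c - cdf_min) * 255 / (total - cdf_min))) for c in cdf]
    return [[lut[value] for value in row] for row in image]


# A dull, low-contrast patch gets stretched to the full range.
print(equalize_histogram([[100, 110], [120, 130]]))  # [[0, 85], [170, 255]]
```

Equalization evens out overall brightness and contrast, which helps when the room lighting changes. It does nothing about specular highlights, though: a bright glint on the eye is still the brightest thing in the image after the stretch.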

Feeling Better(?)

I thought writing this app would make me feel better about myself. It didn’t really. I still feel all sorts of shitty. On the plus side, I learned a few new skills, though as usual, they’re not very applicable skills in the job market. Oh sure, writing mobile apps is a Big Deal right now, but it’s not an end in itself to me. I don’t view software development as a core activity that I should be doing; rather, it’s a by-product of getting a job done. I just happen to like writing programs. Those are the kinds of roles I’m looking for, and they are hard to come by. And yet when I applied, nobody replied. It’s really quite frustrating.

You know what did work though? I took up running again, and I started feeling better again. It did feel strange though, driving to the bay for a run. Imagine what people from ancient times would say: you… take an iron horse so that you could go for a run? You run for pleasure??!

Spin Off

This was initially a private blog post but I had published it to the wrong blog by accident. It was up for about an hour before I realized it and took it down. A friend of mine saw it and asked if the eye tracking app would be available on the Google Play store. I then showed off the app to a number of other people, and the response was similar. However, nobody would give me a price they’d be willing to pay. After conferring with my startup cofounders, we’ve decided to make it a service, if the demand is high enough.

And so after editing a lot of this post and removing a lot of whinges regarding job searching, I would like to introduce eyemap.io – Gaze Analytics For the Rest Of Us.

There is a business case to be made. Gaze tracking setups are expensive. Pressyo can provide them cheaply. But before we can even decide to turn this hack of a codebase into a proper business, we’d like to run a survey to see if anyone is really interested in, and willing to pay for, such a system.

And so we hastily threw up a website. Now go tell us if you’re interested in the service, and how much you would pay for it. We ask for your credit card details because we only want serious replies. They won’t be charged until we determine that there is sufficient demand.

Fun Facts About Gaze Tracking

After writing this app, these are some of the fun facts I learned:

- The eye moves a lot. I tried this not only on myself, but on other people in my household as well. Everyone’s eyes move a lot. So damn much. Microsaccades are real. They just can’t stop moving. These were even captured on a relatively low frame rate camera, so there.

- I suddenly understand the power of Google Authorship in terms of SEO. This one caught me by surprise when I was tracking myself reading Paul Krugman’s article. According to the gaze analytics, I dwell on faces for longer than normal. I repeated this experiment with my housemates. Everyone seems to have longer-than-normal dwell times on faces. A bit nuts, really. I didn’t expect myself to fall for such tricks, and yet I do. Scumbag brain.

- Ad blindness. I literally ignore the right and left sidebars of websites which have ads. I know that when I was working in online advertising, I would have to force myself to find the ads and look at them. Naturally, I literally don’t look at the sidebars.

- Content scanning. Traditional wisdom says we scan content in an F-shaped way. From my analytics, we appear to scan content in an F-shaped way, but back and forth. It’s quite involuntary. I’ve tried stilling my eyes, to no avail.

- Pictures work. This somehow depresses me more than I expected. Pictures really draw attention away from text. Which is weird, since I love long form content. I like promoting long form content. But sites like Buzzfeed work because pictures drag my eyes’ attention towards them. And this is why it’s depressing – everyone will be optimizing to the lowest common denominator and soon we won’t have good long form article sites again.

TL;DR

I got a bit sad after being rejected for a couple of jobs that I wanted, so I decided to learn Android programming to write an app to track my gaze. I did, and after some consulting with my startup cofounders, I would like to introduce eyemap.io – Gaze Analytics For the Rest Of Us.